The Dream Team

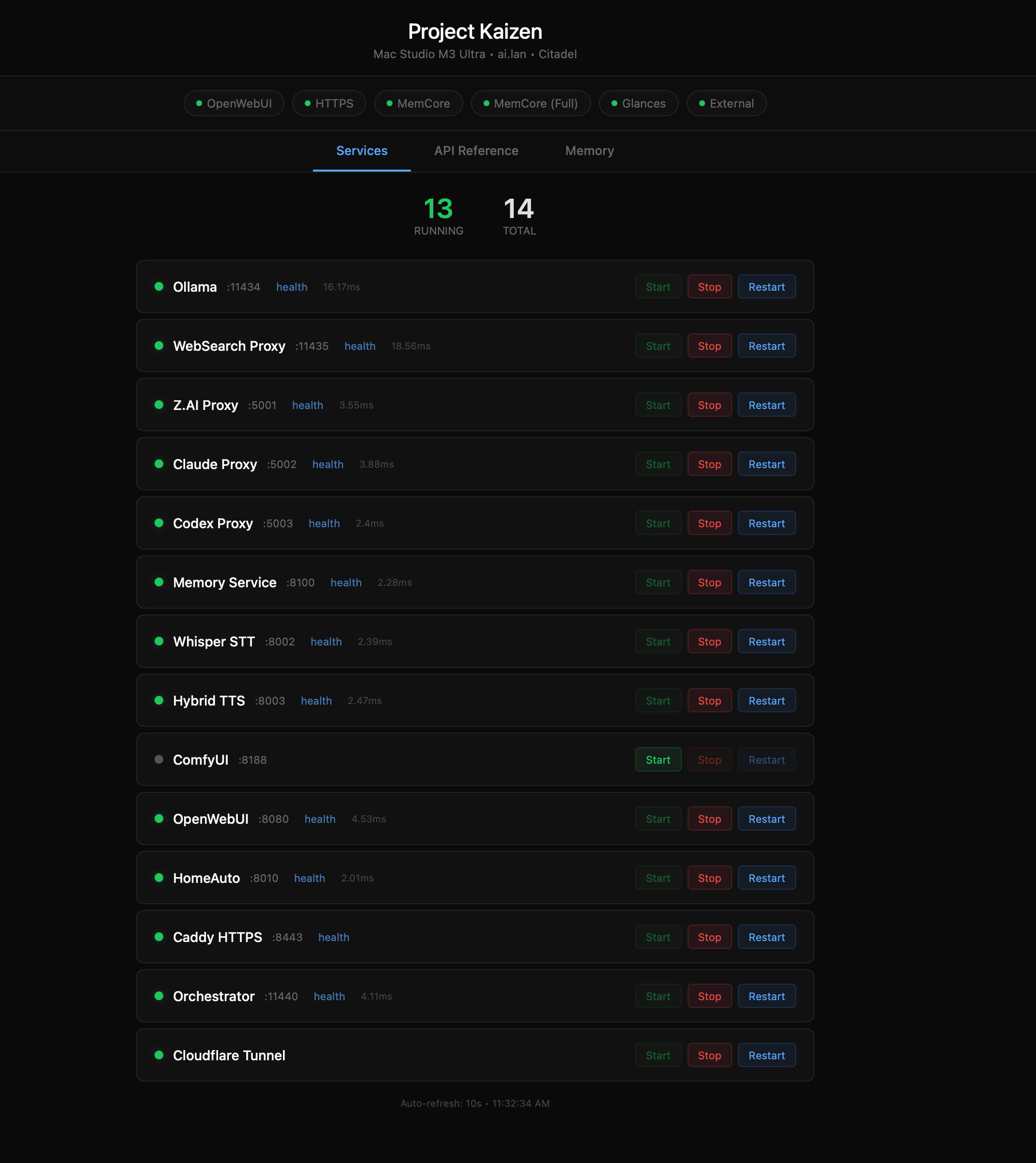

13 Active Services. One Unified Intelligence.

Every service runs natively on a single Mac Studio M3 Ultra — 512GB unified memory, 80 GPU cores, and 819 GB/s memory bandwidth. No Docker. No cloud inference. No data leaves the network. Powered by Ollama v0.17.5 with native Metal GPU acceleration.

System Architecture

Single-Node Native Architecture (Ollama v0.17.5)

The backbone of Project Kaizen is Ollama v0.17.5 running natively on macOS with Metal GPU acceleration. All services run as native processes, with no Docker and no containers. Support for the qwen35moe architecture (added in v0.17.4) makes it possible to run 397-billion-parameter Mixture-of-Experts models locally.

How it works:

- Ollama manages model loading and inference with Metal GPU acceleration

- 512GB unified memory holds the full 189GB Qwen3.5-397B model in RAM

- Mixture-of-Experts architecture activates only 17B of 397B parameters per token

- All services orchestrated via `kaizen.sh` with health monitoring

- The stack presents OpenAI-compatible API endpoints for all consumers

Ollama Endpoint: http://ai.lan:11434/v1
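Because the endpoint is OpenAI-compatible, any client that can send a chat-completions request should be able to talk to it. The following is a minimal sketch using only the Python standard library. The helper name `build_chat_request` is hypothetical, the model tag `max:voice` is borrowed from the memory table on this page, and `/chat/completions` is the usual OpenAI-style route under `/v1`. The request is built but not sent.

```python
import json
import urllib.request

BASE_URL = "http://ai.lan:11434/v1"  # Ollama endpoint listed on this page


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("max:voice", "Hello, Max")
# To send it: urllib.request.urlopen(req), then JSON-decode the response body.
```

Keeping request construction separate from sending makes it easy to inspect the payload before it reaches the model.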

Service Orchestration

All services run natively on macOS, managed by the Orchestrator. Each service exposes a FastAPI or Flask endpoint on a dedicated port.

| Service | Port | Description |
|---|---|---|
| Ollama | 11434 | LLM inference engine (v0.17.5, qwen35moe) |
| WebSearch Proxy | 11435 | Search, memory, and context injection |
| Orchestrator | 11440 | Dashboard API, memory proxy, service control |
| Whisper STT | 8002 | MLX Speech-to-Text |
| Hybrid TTS | 8003 | Kokoro + Piper voice synthesis |
| OpenWebUI | 8080 | Web chat interface (v0.8.1) |
| Memory Service | 8100 | Mem0 + ChromaDB personal memory |
| Z.AI Proxy | 5001 | GLM-5, GLM-4.7, GLM-4.6 cloud models |
| Claude Proxy | 5002 | Claude Opus 4.6, Sonnet 4.6, Haiku 4.5 |
| Codex Proxy | 5003 | GPT-5.2 Codex, GPT-5.1 Codex Max |
| Caddy | 8443 | HTTPS reverse proxy |
| Cloudflare Tunnel | n/a | Secure external access |
| Glances | 61208 | System monitoring |
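A port-per-service layout like this lends itself to a simple health sweep. The sketch below is an assumption about how such a check could look, not the Orchestrator's actual code: the `SERVICES` dictionary copies a subset of ports from the table, while `tcp_probe` and `health_report` are hypothetical helper names. The probe is injectable so the report logic can be exercised without a running stack.

```python
import socket
from typing import Callable, Dict

# Ports copied from the service table; the dictionary itself is illustrative.
SERVICES: Dict[str, int] = {
    "Ollama": 11434,
    "WebSearch Proxy": 11435,
    "Orchestrator": 11440,
    "Whisper STT": 8002,
    "Hybrid TTS": 8003,
    "OpenWebUI": 8080,
    "Memory Service": 8100,
}


def tcp_probe(host: str, port: int, timeout: float = 0.5) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def health_report(
    services: Dict[str, int],
    probe: Callable[[str, int], bool] = tcp_probe,
    host: str = "127.0.0.1",
) -> Dict[str, str]:
    """Map each service name to "up" or "down" using the given probe."""
    return {
        name: ("up" if probe(host, port) else "down")
        for name, port in services.items()
    }
```

Calling `health_report(SERVICES)` on the host would attempt a real TCP connection to each port. A listening port only shows that a process is bound there, so a fuller check would also call each service's own health route, if it has one.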

Memory Architecture

Models are loaded into unified GPU memory by Ollama on demand. The M3 Ultra's memory controller handles efficient allocation across the 80 GPU cores.

| Component | Allocation |
|---|---|
| max:deep / max:think (Qwen3.5-397B-A17B) | ~189 GB |
| max:voice (Qwen3.5-35B-A3B) | ~23 GB |
| max:mem (qwen3:8b), fact extraction | ~5 GB |
| mxbai-embed-large, embeddings (1024-dim) | ~0.7 GB |
| Voice STT (MLX Whisper Large-v3 Turbo) | ~0.8 GB |
| Voice TTS (Kokoro-82M) | ~0.5 GB |
| ChromaDB (vector storage) | Variable |
| System & Services | ~40 GB |
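As a quick sanity check on the budget, the fixed allocations can be summed against the 512GB total. This is a rough sketch under two assumptions: every listed model is resident at the same time (in practice Ollama loads them on demand), and 1 GB means 10^9 bytes. ChromaDB is left out because its footprint is variable.

```python
TOTAL_GB = 512  # unified memory on the M3 Ultra

# Fixed allocations from the table; ChromaDB is omitted because it is variable.
ALLOCATIONS_GB = {
    "max:deep / max:think": 189,
    "max:voice": 23,
    "max:mem": 5,
    "mxbai-embed-large": 0.7,
    "Whisper STT": 0.8,
    "Kokoro TTS": 0.5,
    "System & Services": 40,
}

used_gb = round(sum(ALLOCATIONS_GB.values()), 1)
headroom_gb = round(TOTAL_GB - used_gb, 1)

# Rough weight density of the largest model: 189 GB spread over 397B parameters.
bits_per_param = round(189e9 * 8 / 397e9, 2)

print(f"used: {used_gb} GB, headroom: {headroom_gb} GB, ~{bits_per_param} bits/param")
```

Under those assumptions roughly half of the unified memory stays free for context caches, ChromaDB, and planned agents. The figure of about 3.8 bits per parameter suggests a quantization near 4 bits, though the page does not state the quantization used.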

Agent Roster

Agent 001 — AuralCraft Planned

Role: Advanced audio synthesis and music generation

Model: Stable Audio Open / MusicGen

Memory: ~12 GB

Generative audio agent capable of creating music tracks, sound effects, and ambient soundscapes from text prompts. Designed to integrate with the voice pipeline for real-time audio augmentation and notification sounds throughout the Kaizen ecosystem.

Capabilities:

- Text-to-music generation with genre/mood control

- Sound effect synthesis from descriptions

- Audio style transfer and remix

- Real-time ambient soundtrack generation

Agent 002 — WebSearchPro Active

Role: Intelligent web search with multi-provider fallback

Stack: FastAPI + SerpAPI + Brave + Google PSE

Port: 11435

Production search proxy (v1.3.0) that queries up to three search providers in sequence, deduplicates results, and injects structured knowledge graph data. Returns clean, LLM-optimized search summaries. Also provides weather via the OpenWeather API, sports scores, and US unit enforcement.
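The cascade-and-deduplicate behavior described above can be sketched as follows. This is an illustration under stated assumptions, not the proxy's actual code: `cascade_search`, the `want` threshold, and the three stub providers are hypothetical, and real providers would call SerpAPI, Brave, and Google PSE.

```python
from typing import Callable, Dict, List

Result = Dict[str, str]                    # e.g. {"title": ..., "url": ...}
Provider = Callable[[str], List[Result]]


def cascade_search(query: str, providers: List[Provider], want: int = 5) -> List[Result]:
    """Try providers in order until `want` unique results are collected."""
    seen_urls = set()
    merged: List[Result] = []
    for provider in providers:
        try:
            results = provider(query)
        except Exception:
            continue  # provider failed: fall through to the next one
        for item in results:
            url = item["url"].rstrip("/").lower()  # crude URL normalization
            if url not in seen_urls:
                seen_urls.add(url)
                merged.append(item)
        if len(merged) >= want:
            break  # enough results, skip the remaining providers
    return merged[:want]


# Stand-in providers so the sketch runs without API keys.
def serpapi_stub(query: str) -> List[Result]:
    raise RuntimeError("quota exceeded")


def brave_stub(query: str) -> List[Result]:
    return [
        {"title": "A", "url": "https://a.example/"},
        {"title": "A again", "url": "https://A.example"},
    ]


def pse_stub(query: str) -> List[Result]:
    return [{"title": "B", "url": "https://b.example"}]


demo = cascade_search("kaizen", [serpapi_stub, brave_stub, pse_stub], want=2)
```

Here the first provider fails, the second contributes one unique result, and the third fills the remaining slot.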

Capabilities:

- 3-provider cascade: SerpAPI → Brave API → Google PSE

- Knowledge Graph, Sports Scores, Related Questions extraction

- OpenWeather API integration for weather queries

- US unit enforcement (USD, Fahrenheit, miles, feet, pounds)

- Voice acknowledgment via TTS ("One moment, I'm checking that for you")

- Conditional web search (only triggers when current info needed)

- Standalone `/v1/search` API for cloud AI proxies (Claude, Codex, Z.AI)

- Model-specific system prompts via `model_router`

3-Step Context Injection:

Identity Enforcement → Conditional Hardware Injection → Personal Memory (Mem0)
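One way to picture the three steps is as a function that prepends a system message to the conversation before it reaches the model. The sketch below is an assumption about the shape of that logic: `inject_context`, the `HARDWARE_KEYWORDS` trigger list, and the prompt wording are all hypothetical placeholders.

```python
from typing import Dict, List

Message = Dict[str, str]

# Assumed trigger words for the conditional hardware step.
HARDWARE_KEYWORDS = ("hardware", "gpu", "memory", "specs", "mac studio")


def inject_context(messages: List[Message], memories: List[str]) -> List[Message]:
    """Prepend one system message built from the three injection steps."""
    user_text = " ".join(
        m["content"] for m in messages if m["role"] == "user"
    ).lower()

    # Step 1: identity enforcement (placeholder wording).
    parts = ["You are Max, the Kaizen assistant."]

    # Step 2: hardware context, only when the user asks about it.
    if any(keyword in user_text for keyword in HARDWARE_KEYWORDS):
        parts.append("Host: Mac Studio M3 Ultra, 512GB unified memory.")

    # Step 3: personal memories retrieved for this request.
    if memories:
        bullet_list = "\n".join(f"- {fact}" for fact in memories)
        parts.append("Known facts about the user:\n" + bullet_list)

    return [{"role": "system", "content": "\n\n".join(parts)}] + messages
```

Making the hardware step conditional keeps the prompt short for ordinary questions while still letting the model answer accurately when asked what it runs on.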

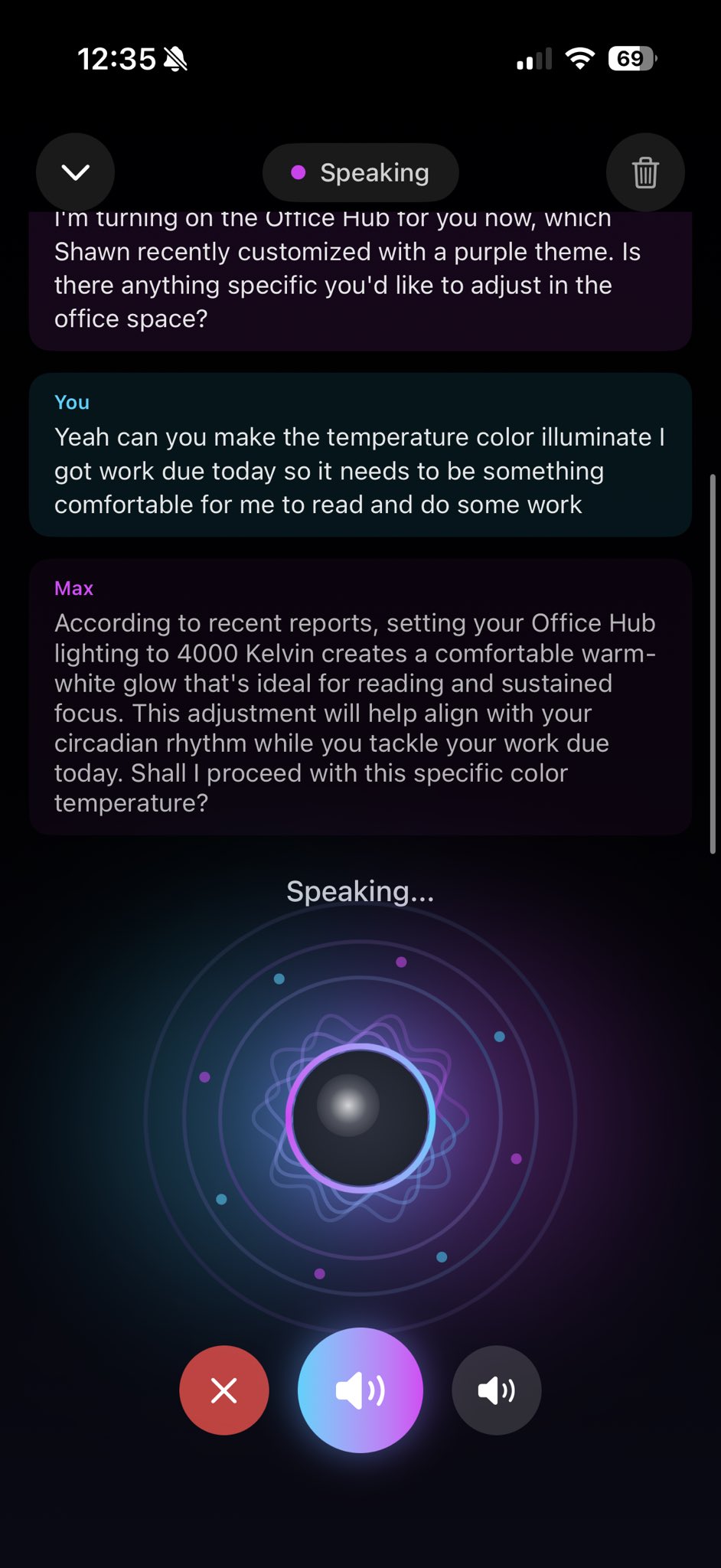

Agent 003 — VoiceAgent Active

Role: Real-time bidirectional voice interaction

Models: MLX Whisper Large-v3 Turbo (STT) + Kokoro-82M (TTS)

Ports: 8002 (STT) / 8003 (TTS)

Latency: ~200-400ms STT, ~300-600ms TTS

The voice pipeline enables natural spoken conversations with Max. Audio streams in, gets transcribed by Whisper running natively on MLX, routed to the primary LLM for response generation, then synthesized back to speech via Kokoro TTS with Piper fallback.

Capabilities:

- Streaming speech-to-text with MLX acceleration

- 10 TTS voices: 8 Kokoro neural + 2 Piper fallback (24kHz output)

- 10-stage text normalization (strips think blocks, markdown, code, HTML)

- Voice Activity Detection for natural turn-taking

- Conversation context maintained via Memory Service
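The text normalization listed above exists because raw LLM output contains material that should never be read aloud. The sketch below shows a handful of plausible stages rather than the actual ten. The function name `normalize_for_tts`, the `<think>` tag format, and the stage order are assumptions, and the regular expressions are deliberately crude.

```python
import re

FENCE = "`" * 3  # three backticks, built this way to keep this sample fence-safe


def normalize_for_tts(text: str) -> str:
    """Apply a few cleanup stages so the TTS engine only sees speakable text."""
    # Reasoning blocks emitted by thinking models.
    text = re.sub(r"<think>.*?</think>", " ", text, flags=re.DOTALL)
    # Fenced code blocks.
    fenced = re.escape(FENCE) + r".*?" + re.escape(FENCE)
    text = re.sub(fenced, " ", text, flags=re.DOTALL)
    # Inline code markers: keep the text, drop the backticks.
    text = re.sub(r"`([^`]*)`", r"\1", text)
    # Leftover HTML tags.
    text = re.sub(r"<[^>]+>", " ", text)
    # Markdown links: keep the link text only.
    text = re.sub(r"\[([^\]]+)\]\([^)]+\)", r"\1", text)
    # Heading markers at the start of a line.
    text = re.sub(r"^\s{0,3}#{1,6}\s*", "", text, flags=re.MULTILINE)
    # Bold and italic markers.
    text = re.sub(r"(\*\*|__|\*|_)(.+?)\1", r"\2", text)
    # Collapse whitespace last.
    return re.sub(r"\s+", " ", text).strip()
```

Order matters: think blocks and code fences are removed before the generic tag and emphasis passes so their contents are dropped rather than partially cleaned.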

Pipeline:

Mic → Whisper STT → LLM → Kokoro TTS → Speaker

Agent 004 — MyDatingWingman Planned

Role: Social dynamics and conversation coaching

Model: Primary LLM with specialized system prompt

Context-aware social assistant that analyzes conversation patterns, suggests openers, and provides real-time coaching for social interactions.

Capabilities:

- Conversation analysis and suggestion generation

- Response timing and tone recommendations

- Social pattern recognition

Agent 005 — MemoryCore Active

Role: Centralized long-term memory and context retention

Stack: Mem0 + ChromaDB + mxbai-embed-large (1024-dim)

Port: 8100

Fact Extraction: max:mem (qwen3:8b)

The shared memory backbone for the entire Kaizen ecosystem. Every conversation, decision, and learned preference gets embedded and stored in the ChromaDB vector database. The WebSearch proxy injects relevant memories into every request. Identity queries expand to 25 memories for rich personal context.

Capabilities:

- Semantic vector search over conversation history

- Automatic memory extraction via max:mem (qwen3:8b)

- Trigger phrases: "remember that...", "add to memory:", "don't forget..."

- MemCore Web UI at `/dashboard/memory` via Orchestrator

- Memory injection into all LLM requests via WebSearch proxy

- Cross-session memory persistence
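Explicit trigger phrases like the ones listed above can be detected with a small pattern match before any model is involved. This is a minimal sketch, assuming the text after the phrase is the fact to store. The function name `extract_explicit_memory` and the trailing-period cleanup are hypothetical.

```python
import re
from typing import Optional

# Trigger phrases quoted in the capabilities list above.
TRIGGER = re.compile(
    r"\b(?:remember that|add to memory:|don['’]?t forget)\s*(?P<fact>.+)",
    re.IGNORECASE | re.DOTALL,
)


def extract_explicit_memory(message: str) -> Optional[str]:
    """Return the text after a trigger phrase, or None if no trigger is present."""
    match = TRIGGER.search(message)
    if not match:
        return None
    return match.group("fact").strip().rstrip(".")
```

For example, `extract_explicit_memory("Remember that my sister lives in Austin.")` returns `"my sister lives in Austin"`, while a message without a trigger returns `None` and would be left to automatic extraction.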

Architecture:

Conversation → max:mem Extraction → mxbai-embed-large Embedding → ChromaDB Store → Semantic Retrieval → Context Injection
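The embed, store, and retrieve steps of this flow can be illustrated with a tiny in-memory stand-in. Everything here is a sketch under loud assumptions: `embed` is a toy letter-count vector used only to keep the example runnable (the real stack uses mxbai-embed-large), and `MemoryStore` is a hypothetical class standing in for ChromaDB.

```python
import math
from typing import List, Tuple


def embed(text: str) -> List[float]:
    """Toy stand-in for a real embedding model: normalized letter counts (26-dim)."""
    vec = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            vec[ord(ch) - ord("a")] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class MemoryStore:
    """In-memory sketch of the store and retrieve steps."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, List[float]]] = []

    def add(self, fact: str) -> None:
        self._items.append((fact, embed(fact)))

    def search(self, query: str, k: int = 5) -> List[str]:
        q = embed(query)
        # Vectors are unit length, so the dot product is the cosine similarity.
        scored = [
            (sum(a * b for a, b in zip(q, vec)), fact)
            for fact, vec in self._items
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [fact for _, fact in scored[:k]]


store = MemoryStore()
for fact in ("likes espresso", "drives a truck", "allergic to peanuts"):
    store.add(fact)
top = store.search("espresso likes", k=1)
```

The `k` parameter is where a wider retrieval, such as the 25 memories used for identity queries, would plug in.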

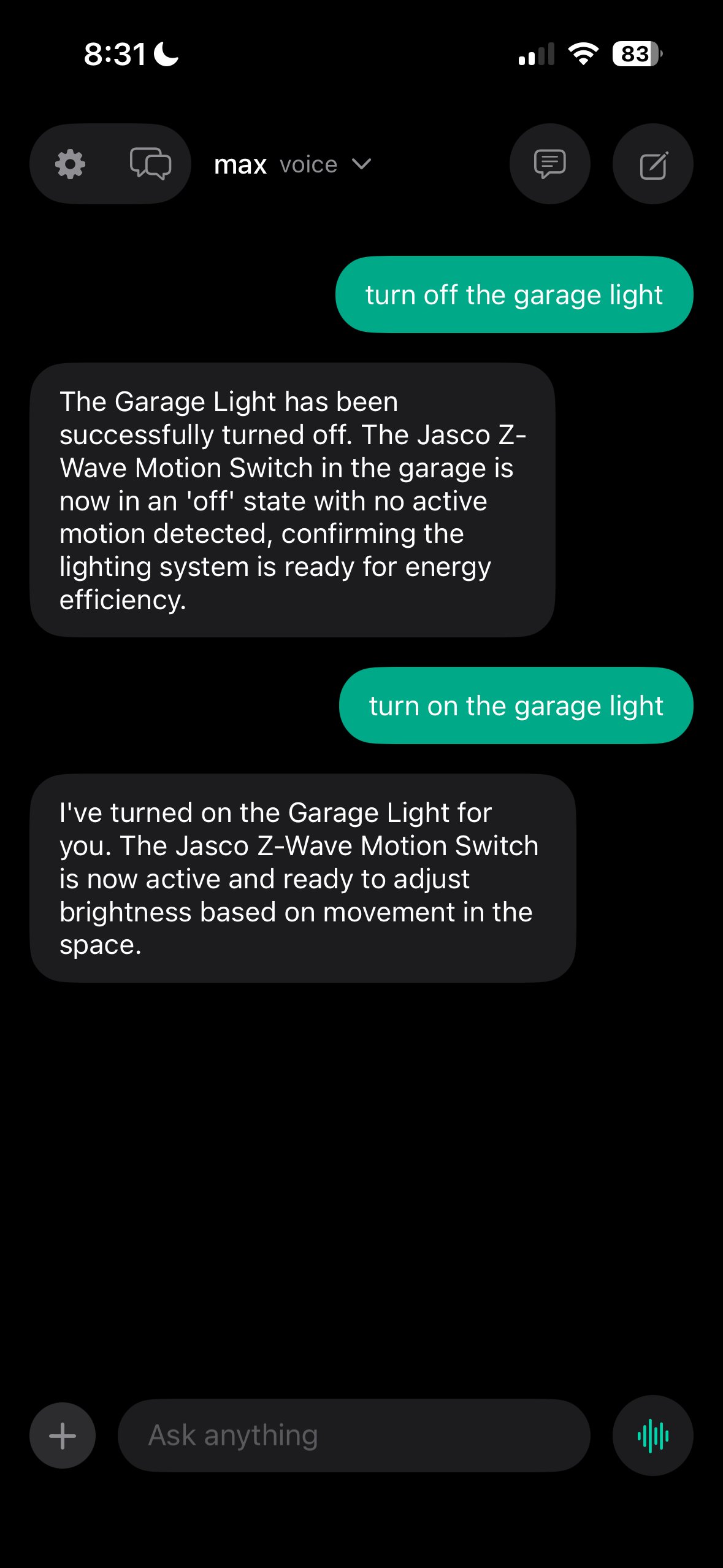

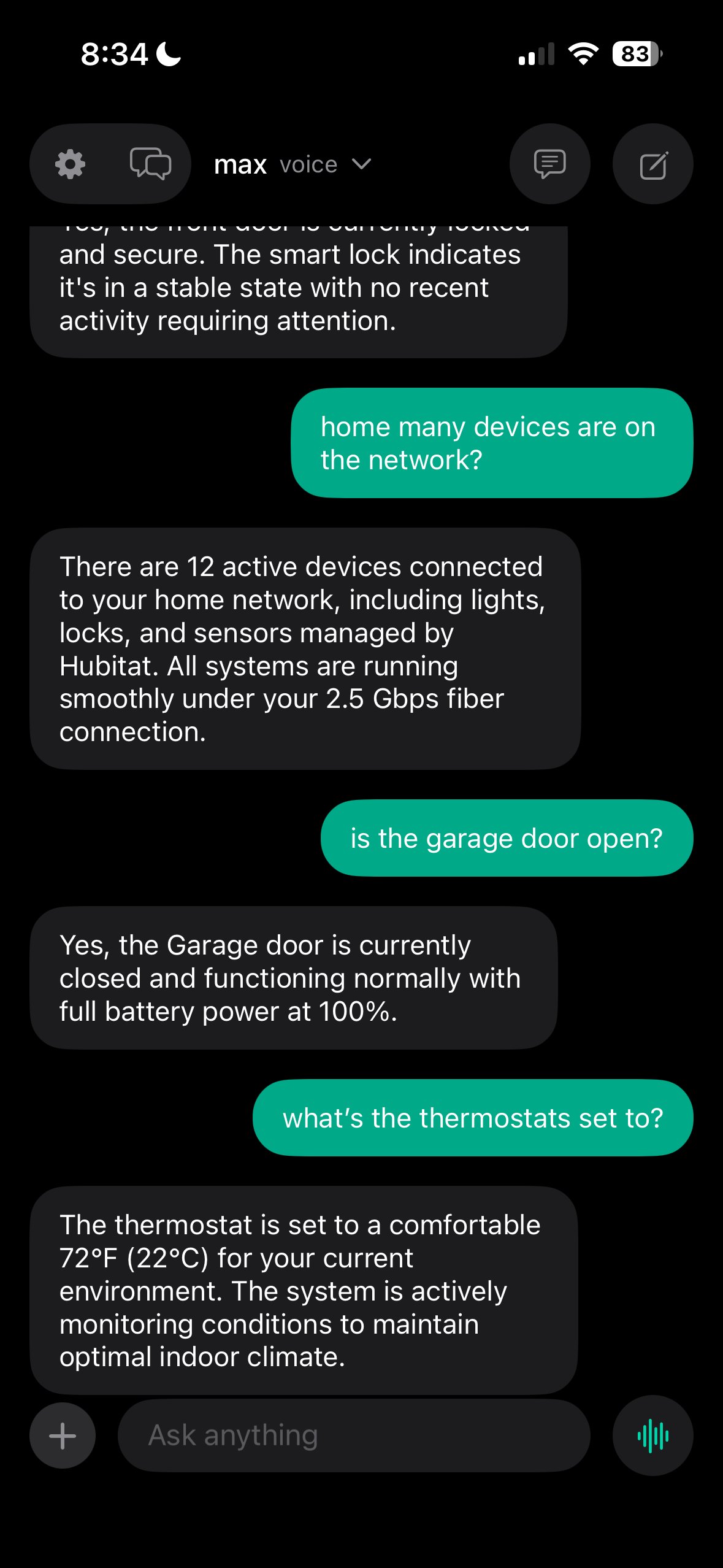

Agent 006 — HomeAuto Active

Role: Smart home orchestration and environment control

Integration: Hubitat Maker API + UniFi Protect + Homebridge

LLM-driven home automation agent that goes far beyond simple rules. An open-weight model running locally on the M3 Ultra reasons about real-world events — camera motion, presence detection, lock state changes — and decides whether to act, monitor, or ignore based on full contextual awareness.

Capabilities:

- LLM-driven intent detection on sensor/camera events

- Voice-controlled lighting, locks, climate, and security via Hubitat

- Contextual reasoning (time-of-day, presence patterns, behavioral norms)

- UniFi Protect camera integration for visual event classification

- Automated HSM arming/disarming tied to lock and presence events

- TTS confirmation loop for safety-critical actions

- Vector memory retrieval before every reasoning step

Pipeline:

Event Ingestion → Vector Memory Lookup → Constrained Reasoning → Intent Classification → Tool Selection → Maker API Execution → TTS Confirmation
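The tail end of this pipeline (intent classification, tool selection, Maker API execution, and the confirmation gate) might look roughly like the sketch below. It is an assumption-heavy illustration: the `Decision` class, the `SAFETY_CRITICAL` command set, and `maker_api_url` are hypothetical, and the URL layout follows the Maker API pattern of app ID, device ID, command, and access token, which should be verified against the hub's own Maker API page.

```python
from dataclasses import dataclass
from urllib.parse import urlencode

# Assumed set of commands that require a spoken confirmation first.
SAFETY_CRITICAL = {"lock", "unlock", "arm", "disarm"}


@dataclass
class Decision:
    intent: str           # "act", "monitor", or "ignore"
    device_id: str = ""
    command: str = ""

    @property
    def needs_confirmation(self) -> bool:
        """Safety-critical actions go through the TTS confirmation loop."""
        return self.intent == "act" and self.command in SAFETY_CRITICAL


def maker_api_url(hub: str, app_id: str, token: str, decision: Decision) -> str:
    """Build a Maker API command URL for an "act" decision."""
    if decision.intent != "act":
        raise ValueError("only 'act' decisions map to a device command")
    path = f"/apps/api/{app_id}/devices/{decision.device_id}/{decision.command}"
    return f"http://{hub}{path}?{urlencode({'access_token': token})}"
```

Separating the decision from the URL construction means a "monitor" or "ignore" outcome can never reach the hub by accident.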

Deep Dive: Full Architecture Breakdown

See the complete orchestration pipeline, intent detection logic, security model, and integration map for HomeAuto.

Agent 007 — NetGuardian Planned

Role: Network security monitoring and intrusion prevention

Integration: UniFi Dream Machine SE + Suricata

Active network security agent that monitors traffic patterns, detects anomalies, and can trigger automated firewall rules via the UniFi API. Maintains a threat intelligence feed and correlates events across network segments.

Capabilities:

- Real-time traffic anomaly detection

- Automated firewall rule generation via UniFi API

- Threat intelligence feed integration

- Port scan and brute force detection

- Security event correlation and alerting

- VPN tunnel monitoring

Agent 008 — NetScope Planned

Role: Network analytics and traffic optimization

Integration: UniFi DPI + ntopng

Deep packet inspection and traffic analysis agent focused on network performance optimization. Monitors bandwidth utilization, identifies bottlenecks, and provides actionable recommendations for network configuration.

Capabilities:

- Per-device bandwidth monitoring and historical trends

- Application-level traffic classification

- Latency and jitter analysis per network segment

- DNS query analytics and optimization

- QoS policy recommendations

- Network topology visualization

Agent 009 — KMS In Progress

Role: Credential and secret management

Stack: HashiCorp Vault (dev mode) + age encryption

Centralized key management for all Kaizen services. Stores API keys, service credentials, certificates, and encryption keys. All agents authenticate with KMS before accessing external services.

Capabilities:

- Encrypted credential storage with audit logging

- Automatic key rotation on configurable schedules

- Per-agent access policies and scoping

- Certificate generation and renewal

- Secret injection into services at runtime

Agent 010 — AdminAI Planned

Role: System administration and maintenance automation

Integration: macOS system calls + service management

Automated sysadmin that handles routine maintenance: service health checks, log rotation, backup scheduling, disk usage monitoring, and OS updates. Can be triggered by schedule or by other agents when issues are detected.

Capabilities:

- Service health monitoring and auto-restart

- Automated backup to local NAS (Time Machine + rsync)

- Disk space monitoring with cleanup recommendations

- Service dependency tracking and restart ordering

- System update scheduling and rollback
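Dependency tracking and restart ordering is essentially a topological sort. The sketch below uses the standard library's `graphlib` (Python 3.9+). The `DEPENDS_ON` map is a hypothetical guess at how the services relate, loosely based on the data flow described on this page, and is not taken from the actual configuration.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each service lists what must be running first.
DEPENDS_ON = {
    "Ollama": set(),
    "Memory Service": {"Ollama"},
    "WebSearch Proxy": {"Ollama", "Memory Service"},
    "Orchestrator": {"WebSearch Proxy", "Memory Service"},
    "OpenWebUI": {"WebSearch Proxy"},
}

# static_order() yields every service after all of its dependencies.
restart_order = list(TopologicalSorter(DEPENDS_ON).static_order())
print(restart_order)
```

If the map ever contains a cycle, `TopologicalSorter` raises `graphlib.CycleError`, which is a useful early warning that two services have been declared as depending on each other.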

Agent 011 — StableDiffusion Planned

Role: High-quality image generation

Models: Flux.1-dev + SDXL Turbo

Local image generation using state-of-the-art diffusion models optimized for Apple Silicon via MLX.

Capabilities:

- Text-to-image with Flux.1-dev (high quality)

- Rapid preview generation with SDXL Turbo

- Image-to-image transformation and inpainting

- ControlNet pose/depth/edge guidance

- Style transfer and consistent character generation

Agent 012 — VideoGen Planned

Role: Text-to-video generation

Model: Mochi Preview

Video generation agent leveraging the Mochi model for creating short video clips from text descriptions. Designed for content creation, visualization, and creative experimentation.

Capabilities:

- Text-to-video generation (up to 10s clips)

- Frame interpolation and slow motion

- Video style transfer

- Storyboard-to-video pipeline

Agent 013 — SystemAdmin Active

Role: Core infrastructure management and orchestration

Stack: FastAPI + Prometheus + kaizen.sh

Port: 11440

The master orchestrator that manages all other services. Handles service discovery, health monitoring, request routing, and resource allocation. Maintains the service registry and ensures the stack operates within memory and compute budgets.

Capabilities:

- Service lifecycle management (start/stop/restart)

- Request routing and health check monitoring

- Resource usage tracking (GPU memory, CPU, bandwidth)

- Prometheus metrics export for Grafana dashboards

- Memory API proxy for external dashboard access

- Same-origin design for HTTPS and external access

Data Flow Architecture

    ┌─────────────────────────────────────────────────┐
    │                   User Input                    │
    │        (Voice / Web UI / API / iOS App)         │
    └──────────────────────┬──────────────────────────┘
                           │
                           ▼
                   ┌────────────────┐
                   │  Orchestrator  │ :11440
                   │ (SystemAdmin)  │
                   └───────┬────────┘
                           │
             ┌─────────────┼─────────────┐
             ▼             ▼             ▼
        ┌─────────┐   ┌──────────┐  ┌──────────┐
        │  Voice  │   │  Search  │  │  Memory  │
        │8002/8003│   │  :11435  │  │  :8100   │
        └────┬────┘   └────┬─────┘  └────┬─────┘
             │             │             │
             └─────────────┼─────────────┘
                           │
                           ▼
                  ┌─────────────────┐
                  │     Ollama      │ :11434
                  │   (M3 Ultra)    │
                  │  512GB Unified  │
                  └─────────────────┘

All inference requests ultimately flow to Ollama on port 11434. The WebSearch proxy enriches queries with search results, personal memory from Mem0, and conditional hardware context before forwarding to Ollama for model inference.

Performance Benchmarks

| Metric | Target | Current |
|---|---|---|
| max:voice throughput | > 30 tok/s | 42.9 tok/s |
| max:deep throughput | > 15 tok/s | 17.6 tok/s |
| max:think throughput | > 15 tok/s | 17.5 tok/s |
| Voice STT latency | < 400ms | ~200-400ms |
| Voice TTS latency | < 600ms | ~300-600ms |
| Search query response | < 2s | ~1.2s |
| Memory retrieval | < 100ms | ~60ms |
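Throughput figures like these can be derived from the timing fields that Ollama's native generate response reports: `eval_count` (tokens generated) and `eval_duration` (nanoseconds). The sketch below shows the arithmetic and a target check. The helper names and the `TARGETS` dictionary are hypothetical, and the sample numbers are invented to reproduce the max:deep figure rather than taken from a real run.

```python
# Targets copied from the benchmark table (tokens per second).
TARGETS = {"max:voice": 30.0, "max:deep": 15.0, "max:think": 15.0}


def tokens_per_second(eval_count: int, eval_duration_ns: int) -> float:
    """Generation throughput from a token count and a duration in nanoseconds."""
    return eval_count / (eval_duration_ns / 1e9)


def meets_target(model: str, tps: float) -> bool:
    """True when measured throughput exceeds the target for that model."""
    return tps > TARGETS[model]


# Invented sample: 880 tokens generated in 50 seconds.
sample_tps = tokens_per_second(eval_count=880, eval_duration_ns=50_000_000_000)
print(f"{sample_tps:.1f} tok/s, target met: {meets_target('max:deep', sample_tps)}")
```

At 17.6 tok/s a 500-token answer takes a little under 30 seconds, which is why the faster max:voice model handles spoken conversation.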