Most people use large language models to generate text. I built one that locks doors, arms alarms, adjusts thermostats, and reasons about who just pulled into the driveway.

This is a local-first home automation agent stack pushing LLMs beyond chat interfaces into real-world actuation. The model itself is not the most interesting part. The real engineering lives in orchestration, constraint design, intent detection, system boundaries, and integration.

What follows is the full architecture. Not a demo. Not a concept. A production system running in a house.

Signal Origination

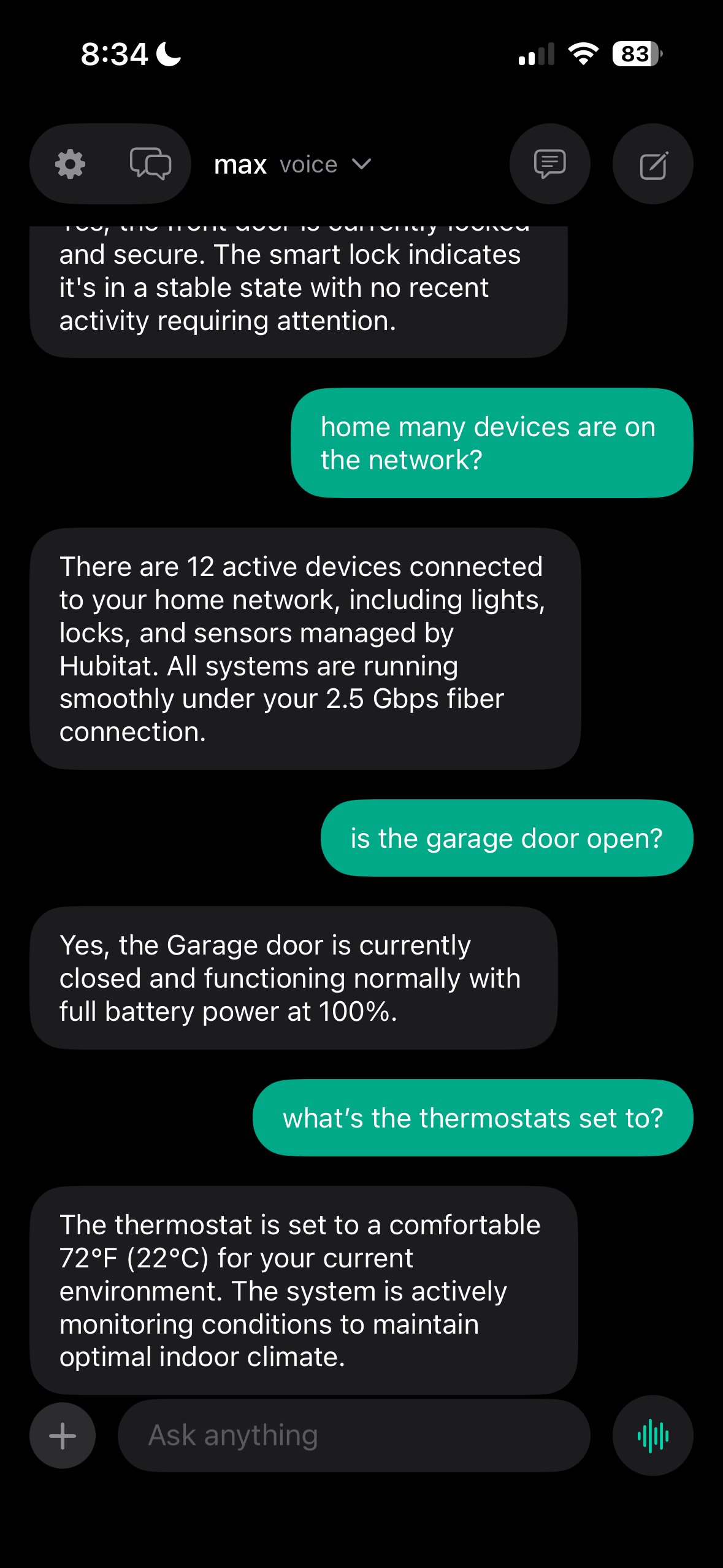

Every action begins with a signal. Signals originate from UniFi Protect — camera motion events, person detection, vehicle detection, presence changes. The Hubitat hub contributes sensor data — door contacts, motion sensors, lock state, temperature, humidity. Network-level events from the UDM-SE add device presence and connection changes.

These structured events initiate reasoning. The agent does not poll. It reacts to real-world triggers pushed via webhooks. A camera detects motion. A contact sensor reports a door opening. A device joins the network. Each event carries metadata — timestamp, device ID, zone, confidence level — that the agent uses to build context before deciding what to do.
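As a minimal sketch of how an incoming webhook payload might be normalized before any reasoning happens: the `Signal` type, its field names, and the epoch-seconds timestamp are illustrative assumptions, not the actual schema that UniFi Protect or Hubitat sends.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Mapping

# Hypothetical set of fields every event is expected to carry.
REQUIRED_FIELDS = {"source", "device_id", "zone", "kind", "timestamp"}


@dataclass(frozen=True)
class Signal:
    source: str         # e.g. "unifi_protect", "hubitat", "udm_se"
    device_id: str
    zone: str
    kind: str           # e.g. "motion", "contact_open", "device_joined"
    confidence: float   # 0.0 to 1.0; sources without a score default to 1.0
    timestamp: datetime


def parse_signal(payload: Mapping[str, Any]) -> Signal:
    """Validate a webhook payload and turn it into an immutable Signal."""
    missing = REQUIRED_FIELDS - set(payload)
    if missing:
        raise ValueError(f"payload missing fields: {sorted(missing)}")
    confidence = float(payload.get("confidence", 1.0))
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return Signal(
        source=str(payload["source"]),
        device_id=str(payload["device_id"]),
        zone=str(payload["zone"]),
        kind=str(payload["kind"]),
        confidence=confidence,
        # Assumes the sender reports epoch seconds.
        timestamp=datetime.fromtimestamp(float(payload["timestamp"]), tz=timezone.utc),
    )
```

Rejecting malformed payloads at this boundary means the model only ever sees events with the metadata it needs to build context.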

The key insight is that signals are not commands. A camera detecting motion in the driveway at 3 AM is not an instruction to turn on lights. It is raw data that needs reasoning. The agent must determine context, match patterns, assess risk, and then decide whether to act, monitor, or ignore.

Local LLM Inference

An open-weight LLM runs locally on an M3 Ultra with 512 GB unified memory via Ollama. The model operates inside a constrained tool environment rather than producing free-form output. It does not generate essays about what it could do. It receives structured events, reasons about them within defined boundaries, and emits tool calls.

The model interfaces with two primary APIs:

UniFi API — Camera feeds, motion events, device presence, network awareness. The agent can query which devices are on the network, check camera thumbnails, and correlate network presence with physical presence. If my phone is on WiFi, I am probably home. If it is not, the 3 AM motion event gets a different risk assessment.

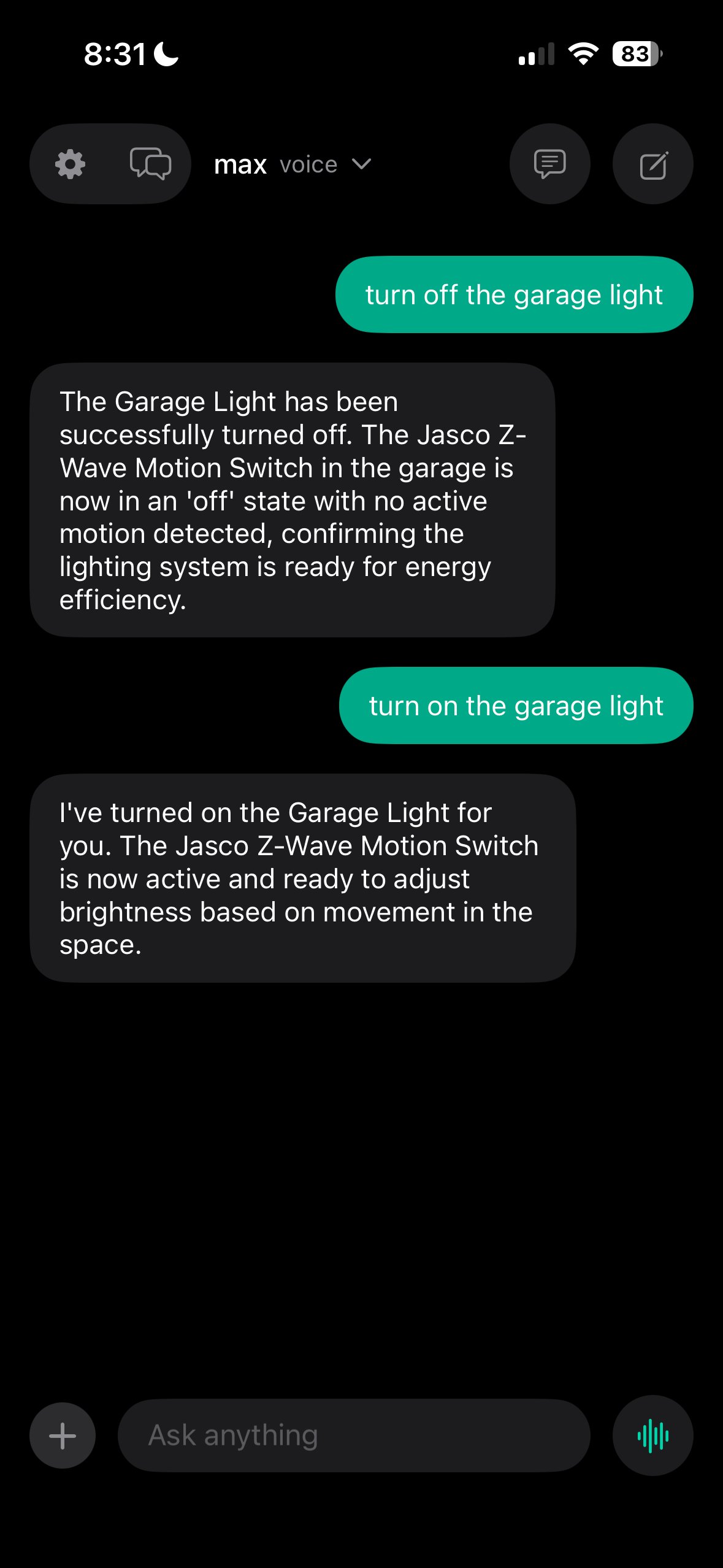

Hubitat Maker API — The primary actuation layer. Lights, locks, sensors, switches, thermostats, garage doors, and the HSM (Hubitat Safety Monitor). Every physical action flows through this API. The agent does not control devices directly. It calls the Maker API, which executes commands through Z-Wave, Zigbee, or WiFi protocols.
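For illustration, a device command through the Maker API is a plain HTTP request against the hub. The sketch below only builds the URL, following the `/apps/api/<app id>/devices/<device id>/<command>` pattern as I understand it from the Maker API documentation. The host, IDs, and token are placeholders, and the exact path should be checked against the URLs your own hub displays.

```python
from typing import Optional, Union
from urllib.parse import quote, urlencode


def maker_command_url(
    hub_host: str,
    app_id: int,
    device_id: int,
    command: str,
    access_token: str,
    value: Optional[Union[int, str]] = None,
) -> str:
    """Build the URL for one device command; sending it is left to the caller."""
    path = f"/apps/api/{app_id}/devices/{device_id}/{quote(command, safe='')}"
    if value is not None:
        # Optional secondary value, e.g. a setpoint or a dimmer level.
        path += f"/{quote(str(value), safe='')}"
    return f"http://{hub_host}{path}?{urlencode({'access_token': access_token})}"


# Placeholder values only.
print(maker_command_url("192.168.1.50", 12, 34, "lock", "TOKEN"))
```

Keeping URL construction in one function gives a single place to attach logging and constraint checks before anything reaches a physical device.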

Homebridge connects the system to Apple Home for iPhone, Apple TV, and Siri. It provides the user-facing integration layer — “Hey Siri, lock the front door” works through Homebridge. But orchestration remains LLM-driven. Homebridge is the remote control. The agent is the brain.

Notice the access boundaries. The model can read from UniFi and Memory, but it can only write through the Hubitat Maker API. This is deliberate constraint design. The agent cannot modify camera settings, change network configuration, or alter its own memory. It observes, reasons, and acts within defined boundaries.
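One way to make that boundary structural rather than advisory is to enforce it when tools are registered. The sketch below is an assumption about how this could look: `Tool`, `ToolRegistry`, and the backend names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Dict


class Access(Enum):
    READ = "read"
    WRITE = "write"


@dataclass(frozen=True)
class Tool:
    name: str
    backend: str               # e.g. "unifi", "memory", "hubitat"
    access: Access
    handler: Callable[..., Any]


# Only the Hubitat Maker API backend is allowed to expose write tools.
WRITE_BACKENDS = frozenset({"hubitat"})


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        if tool.access is Access.WRITE and tool.backend not in WRITE_BACKENDS:
            raise PermissionError(f"{tool.backend} may not expose write tools")
        self._tools[tool.name] = tool

    def call(self, name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            # The model can only invoke tools that were registered.
            raise PermissionError(f"unknown tool: {name}")
        return tool.handler(**kwargs)
```

With this shape, a write tool for cameras or memory cannot exist at all, so no prompt can talk the model into using one.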

Memory and Behavior Shaping

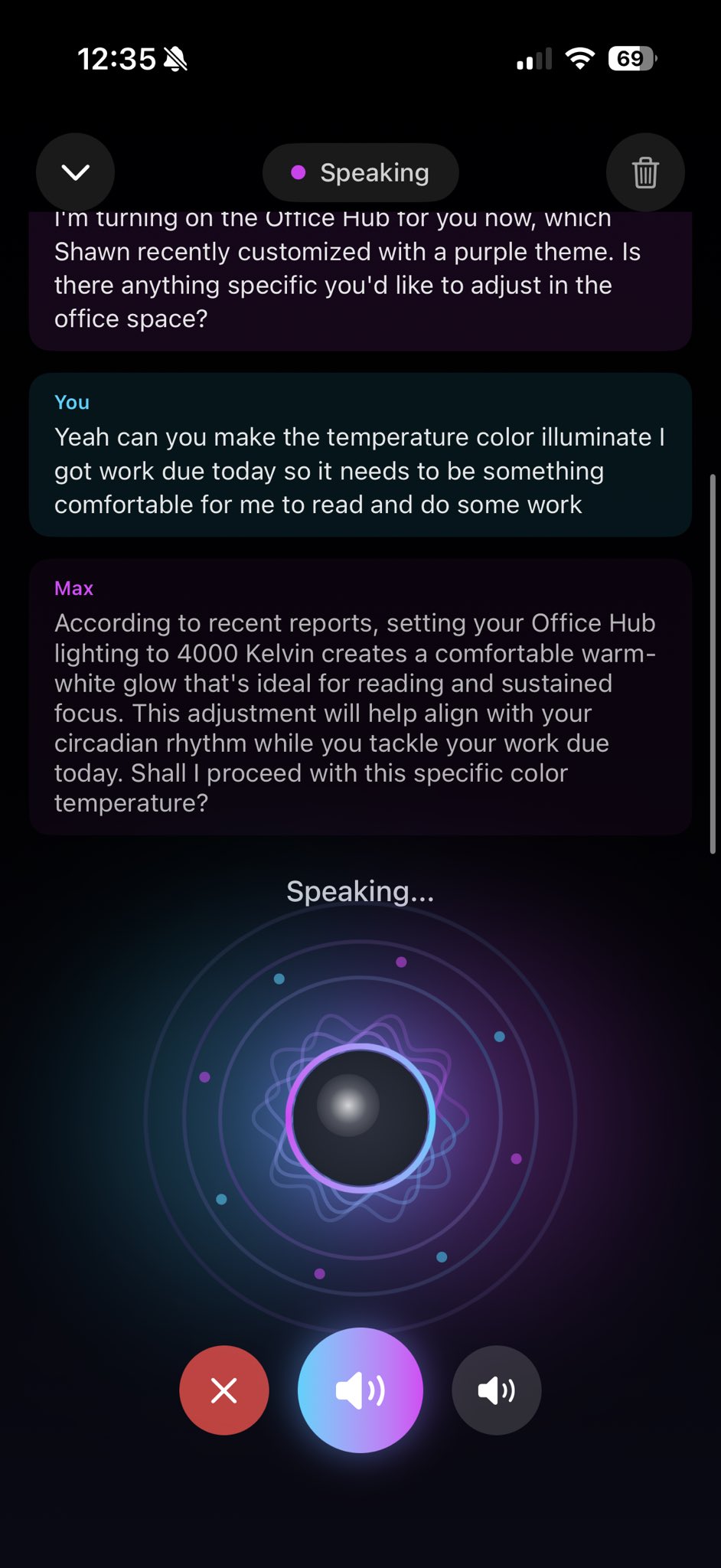

Instead of modifying base model weights, behavior is shaped through a Mem0 + ChromaDB vector memory layer, environment-specific embeddings, structured prompts, and explicit tool constraints.

Context retrieval occurs before reasoning. Before the model sees any event, the memory client queries ChromaDB for relevant memories — past decisions, behavioral norms, user preferences, schedule patterns. The model receives not just the raw event, but a context window that includes everything it has learned about how this household operates.

This allows the model to adapt to real-world patterns without retraining. It learns your schedule, your habits, your preferences — all stored as vector embeddings that surface when semantically relevant. The model is never fine-tuned. Its behavior is shaped entirely by the context it receives.
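A rough sketch of the retrieve-then-reason step follows. It deliberately does not call Mem0 or ChromaDB: `KeywordStore` is a stand-in that ranks memories by shared words instead of embedding similarity, and `MemoryStore` and `build_prompt` are hypothetical names used only to show where retrieval sits relative to the prompt.

```python
from typing import Iterable, List, Protocol


class MemoryStore(Protocol):
    def search(self, query: str, k: int) -> List[str]: ...


class KeywordStore:
    """Stand-in for the vector store: ranks by word overlap, not embeddings."""

    def __init__(self, memories: Iterable[str]) -> None:
        self._memories = list(memories)

    def search(self, query: str, k: int) -> List[str]:
        words = set(query.lower().split())
        scored = [(len(words & set(m.lower().split())), m) for m in self._memories]
        # Drop memories with no overlap, then keep the k best matches.
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [memory for _, memory in ranked[:k]]


def build_prompt(event: str, store: MemoryStore, k: int = 3) -> str:
    """Retrieve context first, then place it ahead of the raw event."""
    memories = store.search(event, k)
    lines = ["Relevant household context:"]
    lines += [f"- {m}" for m in memories] or ["- (none found)"]
    lines += ["", "Event:", event]
    return "\n".join(lines)
```

Because the store sits behind a small interface, the keyword stand-in can be swapped for a real vector query without touching the prompt assembly.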

The Orchestration Pipeline

Each stage of the pipeline is explicitly defined. There is no ambiguity about what happens when. Every event follows the same path, every time.

The confirmation loop matters. When the system locks a door or arms the alarm, it announces it through the speakers. This is not a notification buried in an app. It is a spoken confirmation that the humans in the house can hear. Trust in an automated system comes from transparency, and transparency means telling people what you just did.
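As a rough sketch of that fixed path, assuming the stages are context retrieval, reasoning, constraint checking, actuation, and spoken confirmation. The stage names and the `handle_event` function are hypothetical, inferred from the rest of this article rather than taken from the running system.

```python
from typing import Any, Callable, Iterable, List


def handle_event(
    event: Any,
    retrieve_context: Callable[[Any], Any],
    reason: Callable[[Any, Any], Iterable[str]],
    is_allowed: Callable[[str], bool],
    actuate: Callable[[str], None],
    announce: Callable[[str], None],
) -> List[str]:
    """Run one event through the same ordered stages and return what was done."""
    context = retrieve_context(event)
    performed: List[str] = []
    for action in reason(event, context):
        if not is_allowed(action):
            continue  # rejected by the constraint layer, never reaches hardware
        actuate(action)
        # Confirmation loop: every completed action is spoken aloud.
        announce(f"Action taken: {action}")
        performed.append(action)
    return performed
```

Passing the stages in as callables keeps the ordering in one place and makes each stage testable with simple fakes.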

Intent Detection

This is where the system earns its keep. Not every camera motion or sensor trigger warrants action. The model classifies the event, determines intent, and decides whether to act, monitor, or ignore.

A car pulling into the driveway is treated differently than a cat crossing the yard. A door opening at 6 PM when I normally get home is treated differently than a door opening at 3 AM. The system reasons about context, not just raw signals.

Event: Front door contact opens at 6:14 PM on a Tuesday.

Context: Owner's phone is on WiFi. Normal arrival window. HSM is in "Away" mode.

Decision: ACT — Disarm HSM. Set thermostat to 72. Turn on entryway lights. TTS: "Welcome home."

Event: Front door contact opens at 3:12 AM on a Wednesday.

Context: Owner's phone is on WiFi (home). HSM is in "Night" mode. No motion detected on driveway camera in the last 30 minutes.

Decision: MONITOR — Log the event. Check interior camera. Do not disarm HSM. Do not announce. Flag for review if followed by additional motion.

Event: Driveway camera detects motion at 2:47 AM.

Context: Classification confidence is 34%. No person detected. Previous similar events at this time have been classified as animals (3 occurrences in past week).

Decision: IGNORE — Log the event. No action. Pattern consistent with animal activity.

This prevents false positives and ensures the system only responds when the context matches real conditions. The difference between a useful home automation agent and an annoying one is entirely in the intent detection layer. Hardware is easy. Reasoning about when not to act is hard.
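The three outcomes in the examples above can be represented as a small typed result. In the sketch below, the JSON shape, the `Verdict` and `Decision` names, and the choice to fall back to MONITOR on malformed output are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Verdict(Enum):
    ACT = "ACT"
    MONITOR = "MONITOR"
    IGNORE = "IGNORE"


@dataclass(frozen=True)
class Decision:
    verdict: Verdict
    reason: str
    actions: Tuple[str, ...] = ()


def parse_decision(raw: str) -> Decision:
    """Parse the model's JSON decision; anything malformed becomes MONITOR."""
    try:
        data = json.loads(raw)
        verdict = Verdict(data["verdict"])
        requested = tuple(str(a) for a in data.get("actions", []))
        reason = str(data.get("reason", ""))
    except (json.JSONDecodeError, KeyError, ValueError, TypeError):
        # Never act on output that cannot be understood.
        return Decision(Verdict.MONITOR, "unparseable model output")
    # Actions are only honored when the verdict is ACT.
    actions = requested if verdict is Verdict.ACT else ()
    return Decision(verdict, reason, actions)
```

Defaulting to MONITOR is the conservative choice here: a garbled response results in logging and observation, never in actuation.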

Safety Constraints

Certain actions require explicit safety boundaries. The model cannot freely arm or disarm the security system. It cannot unlock doors without presence confirmation. It cannot disable smoke or CO detectors. These are not suggestions in the system prompt — they are hard constraints in the tool definitions.

Hard constraints (not overridable by the model):

HSM disarm requires the owner's device on the local network.

Lock state changes require presence confirmation or an explicit voice command with identity verification.

Alarm state cannot be modified by the agent during an active alert.

Thermostat setpoints are bounded to 62-78 °F.

The garage door cannot be opened between midnight and 5 AM without an explicit override.
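A minimal sketch of how these rules could live in the tool layer, outside the model's reach. The tool names, the `HouseState` fields, and the reading of "presence confirmation" as the owner's device being on the network are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import time
from typing import Optional

THERMOSTAT_MIN, THERMOSTAT_MAX = 62, 78
GARAGE_QUIET_START, GARAGE_QUIET_END = time(0, 0), time(5, 0)
ALARM_TOOLS = {"hsm_arm", "hsm_disarm"}   # hypothetical tool names
LOCK_TOOLS = {"lock", "unlock"}


@dataclass(frozen=True)
class HouseState:
    owner_on_network: bool
    alert_active: bool
    now: time


def check_action(
    tool: str,
    state: HouseState,
    value: Optional[float] = None,
    voice_verified: bool = False,
    override: bool = False,
) -> Optional[str]:
    """Return None if the call is allowed, otherwise the reason it is refused."""
    if tool in ALARM_TOOLS and state.alert_active:
        return "alarm state cannot change during an active alert"
    if tool == "hsm_disarm" and not state.owner_on_network:
        return "owner device is not on the local network"
    if tool in LOCK_TOOLS and not (state.owner_on_network or voice_verified):
        return "lock change needs presence or a verified voice command"
    if tool == "set_thermostat" and (
        value is None or not THERMOSTAT_MIN <= value <= THERMOSTAT_MAX
    ):
        return f"setpoint must be within {THERMOSTAT_MIN}-{THERMOSTAT_MAX}"
    if tool == "garage_open" and not override:
        if GARAGE_QUIET_START <= state.now < GARAGE_QUIET_END:
            return "garage stays closed between midnight and 5 AM"
    return None
```

Because the check runs in ordinary code before any device call, a persuasive prompt or a confused model cannot widen these limits.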

The TTS confirmation loop adds another safety layer. When the system takes a safety-critical action, it announces it. If the announcement does not match what should have happened, a human can intervene. Transparency is not optional in systems that control physical infrastructure.

Security Model

Zero Trust routing via Cloudflare Tunnel. All services sit behind a private domain. Local-first compute with explicit external access control. Nothing is publicly exposed without defined boundaries.

The homelab is invisible to port scanners. There is nothing to find. Every external connection originates from inside, flows through an encrypted tunnel, and terminates at Cloudflare’s edge. The only way in is through the Zero Trust gate with email OTP authentication. If your email is not on the access list, you get nothing.

Broader System Context

This agent operates inside Project Kaizen, a broader platform with multiple open-weight LLM variants, custom FastAPI endpoints, a central orchestration controller, 13 active services, and a 3-model Max stack running Qwen3.5 with up to 397 billion parameters.

HomeAuto is one agent in a larger ecosystem. The WebSearch proxy gives it real-time data. The Memory service gives it long-term context. The Voice pipeline gives it a way to speak. The Orchestrator gives it a place in the service registry. Everything connects.

The model itself is not the most interesting part. The engineering lives in the orchestration — defining what triggers reasoning, constraining what the model can do, building the safety boundaries, designing the confirmation loops, and integrating it all into a physical environment where mistakes have real consequences.

A bad chatbot response is forgettable. A bad lock command is not.